The AI Coding Trust Gap: Why 84% of Devs Use It But Only 29% Trust What Ships

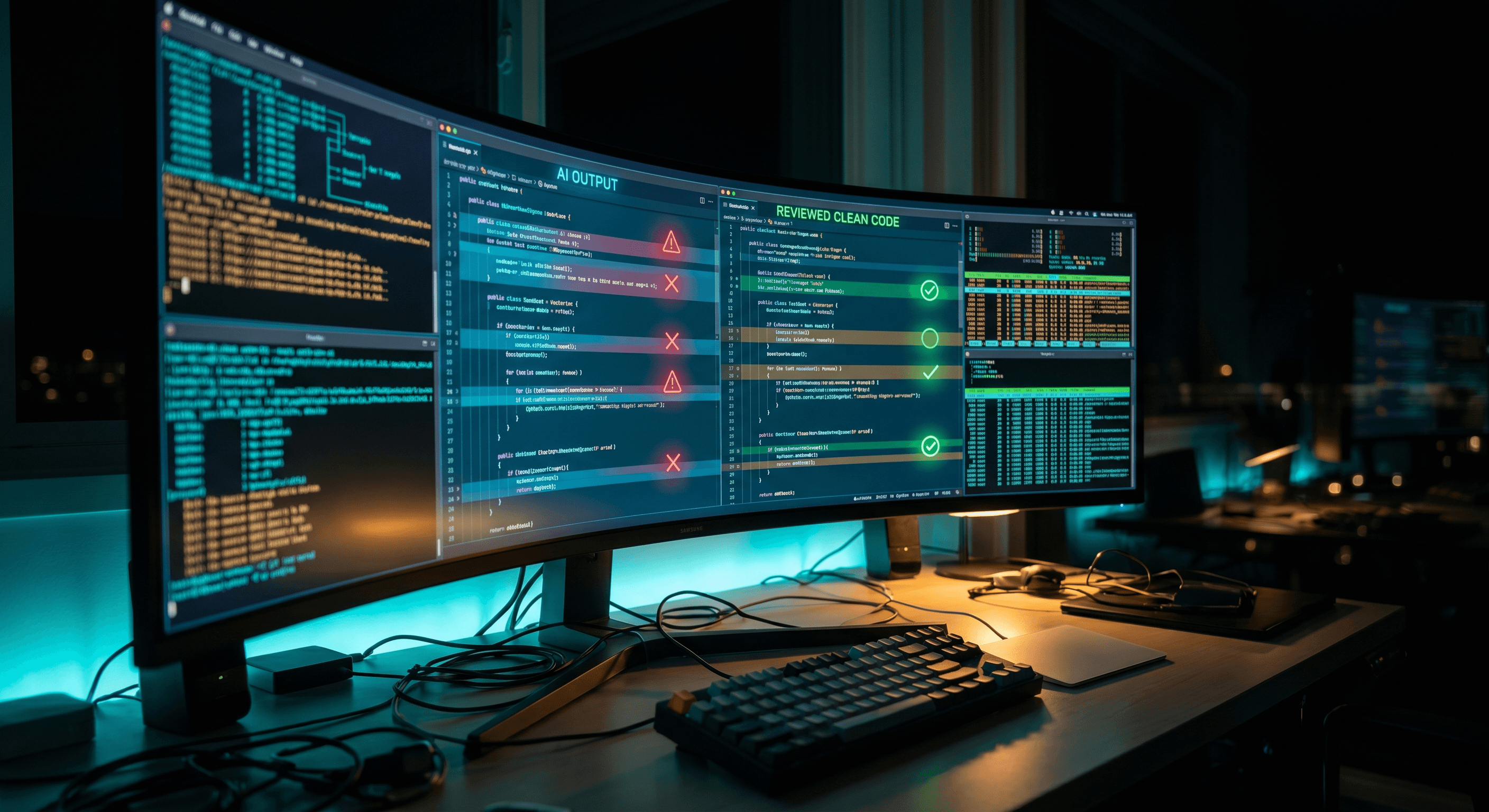

New data shows 84% of developers use AI coding tools, but only 29% trust the output in production. The gap is not a tools problem — it is a code review culture problem.

84% of developers now use AI coding tools. Only 29% trust what actually ships.

That 55-point gap is the most important number in engineering right now, and most CTOs are ignoring it.

A new survey tracking AI adoption across engineering teams in April 2026 surfaced this split, and it points to where the real problem is. Teams are not failing to adopt AI. They are failing to build the review infrastructure that makes AI output safe to ship.

The Gap Is Not About the Tools

When you dig into why developers do not trust AI-generated code, the answers are not "the model hallucinated" or "the output was bad." They are:

- No clear review process before code hits staging

- AI output goes straight into PRs without a quality pass

- Team leads cannot tell the difference between human-written and AI-written code at review time

The tooling works. Cursor, Claude, and GitHub Copilot generate code that looks correct, compiles, and even passes basic tests. The failure point is what happens between generation and deployment.

Most engineering teams optimized for output speed. Few built a review layer to match.

What the 29% Are Actually Doing Right

After running overseas teams where every developer is AI-native by default, I have seen what the confident teams do differently. It comes down to three practices:

1. Treat AI Output Like a Fast Junior Dev's PR

The mental model shift that changes everything: AI is not an expert system you can trust blindly. It is a prolific junior developer that ships volume, misses edge cases, and needs a senior review before anything touches prod.

Engineers who internalize this stop vibing their way to a demo and start asking the same questions they would ask about a junior's PR: Does this handle failure states? Are there race conditions? What happens when the input is malformed?
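To make the "malformed input" question concrete, here is a minimal sketch in Python. The function names and the order-quantity scenario are hypothetical, made up for illustration. The first function is the kind of happy-path code that tends to sail through a demo; the second is roughly what it might look like after a senior review.

```python
def parse_quantity_unreviewed(raw: str) -> int:
    # Happy path only: raises an unhelpful error on "" or "abc",
    # and silently accepts negative or absurdly large values.
    return int(raw)


def parse_quantity_reviewed(raw: object, max_quantity: int = 1000) -> int:
    """Parse a user-supplied quantity, rejecting malformed or out-of-range input.

    The 1..max_quantity range is an assumed business rule for this example.
    """
    if not isinstance(raw, str):
        raise ValueError("quantity must be a string")
    text = raw.strip()
    # Accept plain ASCII digits only; this also rejects "", "-3", and "1.5".
    if not (text.isascii() and text.isdecimal()):
        raise ValueError(f"quantity is not a whole number: {raw!r}")
    value = int(text)
    if not 1 <= value <= max_quantity:
        raise ValueError(f"quantity out of range 1..{max_quantity}: {value}")
    return value
```

Both versions return the same result for clean input like "7". The difference only shows up on the inputs nobody typed during the demo, which is exactly what the review questions are meant to surface.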

2. Gate AI Code at the PR Level With a Prompt Review Checklist

Here is the exact skill file several teams I work with have implemented in Cursor:

# .cursor/skills/ai-pr-review.md

## AI Code Review Gate

Before approving any AI-generated code, confirm:

### Security

- [ ] No hardcoded secrets or API keys

- [ ] Input validation on all user-supplied data

- [ ] Auth checks are not bypassable via edge cases

### Correctness

- [ ] Error states are handled (not just happy path)

- [ ] Async operations have proper await/error handling

- [ ] Data types match the interface contracts

### Maintainability

- [ ] Variable names are meaningful, not auto-generated noise

- [ ] Complex logic has inline comments explaining why, not what

- [ ] No dead code, no unused imports

### Test Coverage

- [ ] At least one test covers the primary path

- [ ] Edge cases from the checklist above have test coverage

## Review Prompt (paste into Claude before approving)

Review this diff for: security vulnerabilities, missing error handling, type safety violations, and anything a senior engineer would flag in code review. List each issue with severity (critical / warning / note).

This takes two minutes per PR. It catches the categories of problems that cause 3 AM incidents.
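If you want the checklist to be more than an honor system, one option is to have CI refuse to pass until every box is ticked. The following is a minimal sketch of that idea, not part of the skill file itself. It assumes the checklist is pasted into the PR description as standard markdown task items, and the function names `unchecked_items` and `gate_passes` are hypothetical. How you fetch the PR description depends on your CI setup and is left out.

```python
import re

# Matches a markdown task item such as "- [ ] text" or "- [x] text".
TASK_ITEM = re.compile(r"^\s*[-*]\s+\[([ xX])\]\s+(.*\S)\s*$")


def unchecked_items(pr_body: str) -> list[str]:
    """Return the text of every checklist item that is still unchecked."""
    pending = []
    for line in pr_body.splitlines():
        match = TASK_ITEM.match(line)
        if match and match.group(1) == " ":
            pending.append(match.group(2))
    return pending


def gate_passes(pr_body: str) -> bool:
    """Pass only if a checklist is present and every item is ticked."""
    has_checklist = any(TASK_ITEM.match(line) for line in pr_body.splitlines())
    return has_checklist and not unchecked_items(pr_body)
```

A PR description with no checklist at all fails the gate in this sketch, on the assumption that a missing checklist means the review was skipped. A ticked box still only proves that someone ticked it, so this complements the human review rather than replacing it.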

3. Build a "Code Origin" Signal Into Your Workflow

Teams that track which code is AI-assisted versus human-written can measure trust over time. A simple convention: AI-generated commits get an [ai] tag in the commit message, and PR descriptions note what was AI-generated and what was reviewed or modified.

This creates accountability without slowing anyone down. Six months in, you can pull data on which developers review AI output carefully and which ones rubber-stamp it.
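As a starting point for pulling that data, here is a minimal sketch that computes, per author, the share of commits carrying the [ai] tag. It assumes you export history with `git log --pretty=format:%an%x09%s`, which prints the author name, a tab, and the commit subject on each line. The function name `ai_commit_share` is hypothetical.

```python
from collections import Counter


def ai_commit_share(log_lines: list[str]) -> dict[str, float]:
    """Map each author to the fraction of their commits tagged [ai].

    Each line is expected in the form "author<TAB>subject".
    """
    total: Counter[str] = Counter()
    tagged: Counter[str] = Counter()
    for line in log_lines:
        author, sep, subject = line.partition("\t")
        if not sep:
            continue  # skip lines that do not match the expected format
        total[author] += 1
        # Case-insensitive match, so "[AI]" counts as well.
        if "[ai]" in subject.lower():
            tagged[author] += 1
    return {author: tagged[author] / count for author, count in total.items()}
```

On its own this only tells you how much of each person's work is tagged as AI-generated. To say anything about review quality, you would still need to join it with review comments or incident data for those commits.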

The Interview Filter That Changes Your Hiring

Founders and hiring managers are asking the wrong question. Asking "do you use AI coding tools?" in 2026 is like asking "do you use Stack Overflow?" in 2015. Everyone does.

The question that filters for the 29% who ship confidently:

"Walk me through your code review process for AI-generated code before it goes to production."

Candidates who have thought about this give you a real answer. They talk about their mental model, their checklist, what they look for. Candidates who have not thought about this give you a blank stare or a vague answer about "checking the output."

That blank stare is how the 55-point trust gap turns into your 3 AM incident.

Get the Full AI Code Review Framework

I posted the full 5-step review gate setup on LinkedIn — including the complete Cursor skill file, the PR checklist template, and how to roll this out across a team without slowing down shipping velocity.

Comment "Guide" on that post and I'll DM you the link directly.

Work With Me

I help engineering orgs adopt AI across their entire team — not just the code, but how product, support, and operations work too. If you want your org moving faster without growing headcount, let's talk.

Kris Chase

@krisrchase